
10.08.2020. February 22, by Jeremy Eder.

If you have a newer version of OpenShift, such as 3. Running general-purpose compute workloads on Graphics Processing Units (GPUs) has become increasingly popular recently in a wide range of application domains, mirroring the increased ubiquity of deploying applications in Linux containers.

Thanks to community participant Clarifai, Kubernetes became able to schedule workloads depending on GPUs beginning with version 1.

These apps typically run on dedicated resources, and are not the kind of stateless microservice greenfield apps we think of when we think of where Kubernetes shines today. But the industry at large is demonstrating a desire to expand the base of applications that can be run optimally in containers, orchestrated by Kubernetes. In the past, Red Hat has experimented with technologies like Intel DPDK and Solarflare OpenOnload, and it's immediately obvious that NVIDIA's progress in containerizing CUDA along with their hardware represents a microcosm of the technical challenges facing those other pieces of hardware, as well as Kubernetes in general, following known patterns for closed source applications wanting to integrate with open source.

These challenges precisely mirror those faced by many other hardware vendors, whether it's co-processors, FPGAs, bypass accelerators or similar. All of that said, the benefits of GPUs and other hardware accelerators over generic CPUs are often dramatic, leading to jobs completing potentially orders of magnitude faster. The demand for blending the benefits of hardware accelerators with data-center-wide workload orchestration is reaching a fever pitch.

Typically, this line of thinking terminates in an important density, efficiency, and often a power-consumption exercise attributable to those efficiency gains. Polishing some of the sharp corners is a community responsibility, and indeed there is plenty of work underway. If you're interested in following upstream developments, I encourage you to monitor Kubernetes sig-node.

Set up a yum repo on the host that has the GPU card. Install the driver and the devel headers on the host.
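As a rough illustration of the repo setup step, here is a minimal Python sketch that renders a yum `.repo` stanza. The repo id, the name and especially the `baseurl` are placeholders invented for this example, not values from the original post; substitute the repository you actually use.

```python
import configparser
import io


def render_repo(repo_id: str, name: str, baseurl: str, gpgcheck: bool = True) -> str:
    """Render a single yum .repo stanza (INI format) as text."""
    # interpolation=None so that '%' characters in URLs are left untouched.
    cfg = configparser.ConfigParser(interpolation=None)
    cfg[repo_id] = {
        "name": name,
        "baseurl": baseurl,
        "enabled": "1",
        "gpgcheck": "1" if gpgcheck else "0",
    }
    buf = io.StringIO()
    # Write key=value without spaces around '=' as is conventional in .repo files.
    cfg.write(buf, space_around_delimiters=False)
    return buf.getvalue()


if __name__ == "__main__":
    # Hypothetical values: replace with the real driver repository details.
    print(render_repo(
        "gpu-driver",
        "GPU driver repository (placeholder)",
        "https://repo.example.com/gpu/rhel7/x86_64",
    ))
```

The resulting text would typically be saved under `/etc/yum.repos.d/` on the GPU host before installing the driver packages.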

Note this step takes about 4 minutes to complete while it rebuilds the kernel module. On the node with the GPU, ensure the new modules are loaded.

On RHEL, the nouveau module will load by default. This prevents the nvidia-docker service from starting. The nvidia-docker service blacklists the nouveau module, but does not unload it.
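The module check described above can be sketched in Python as follows. It parses `lsmod`-style text and reports whether the `nvidia` and `nouveau` modules are present. The sample output is made up for illustration (the sizes are not real), and the function names are hypothetical.

```python
def loaded_modules(lsmod_output: str) -> set:
    """Return the module names from lsmod-style text (first column, header skipped)."""
    names = set()
    for line in lsmod_output.splitlines()[1:]:
        fields = line.split()
        if fields:
            names.add(fields[0])
    return names


def gpu_module_report(lsmod_output: str) -> dict:
    """Report whether the nvidia driver and the conflicting nouveau module are loaded."""
    mods = loaded_modules(lsmod_output)
    return {
        "nvidia_loaded": "nvidia" in mods,
        "nouveau_loaded": "nouveau" in mods,
    }


# Illustrative sample only; on a real node, capture the output of `lsmod` instead.
SAMPLE_LSMOD = """\
Module                  Size  Used by
nvidia_uvm            100000  0
nvidia               9000000  1 nvidia_uvm
nouveau              1500000  0
"""

if __name__ == "__main__":
    print(gpu_module_report(SAMPLE_LSMOD))
```

On a live node the input could come from `subprocess.run(["lsmod"], capture_output=True, text=True).stdout`. If `nouveau_loaded` is true, the module still needs to be unloaded, for example with `modprobe -r nouveau`, or the node rebooted.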

So you can either reboot the node, or remove the nouveau module manually. Note that the kubelet flag is named experimental, and that this was a change.

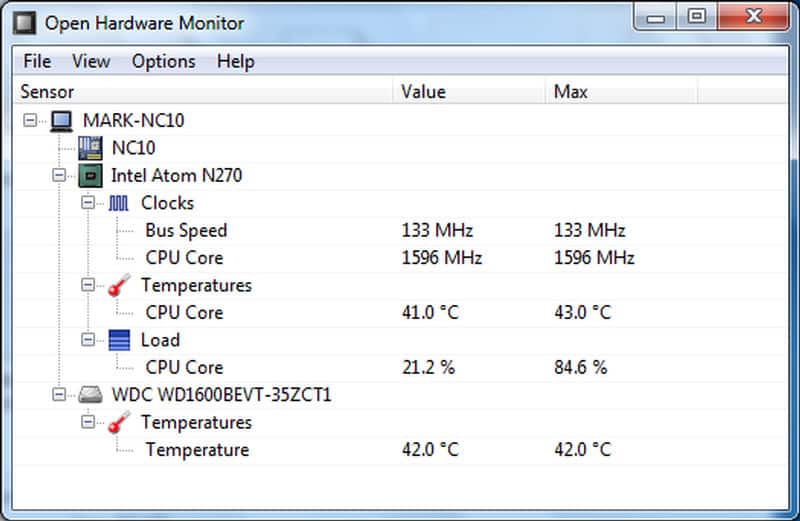

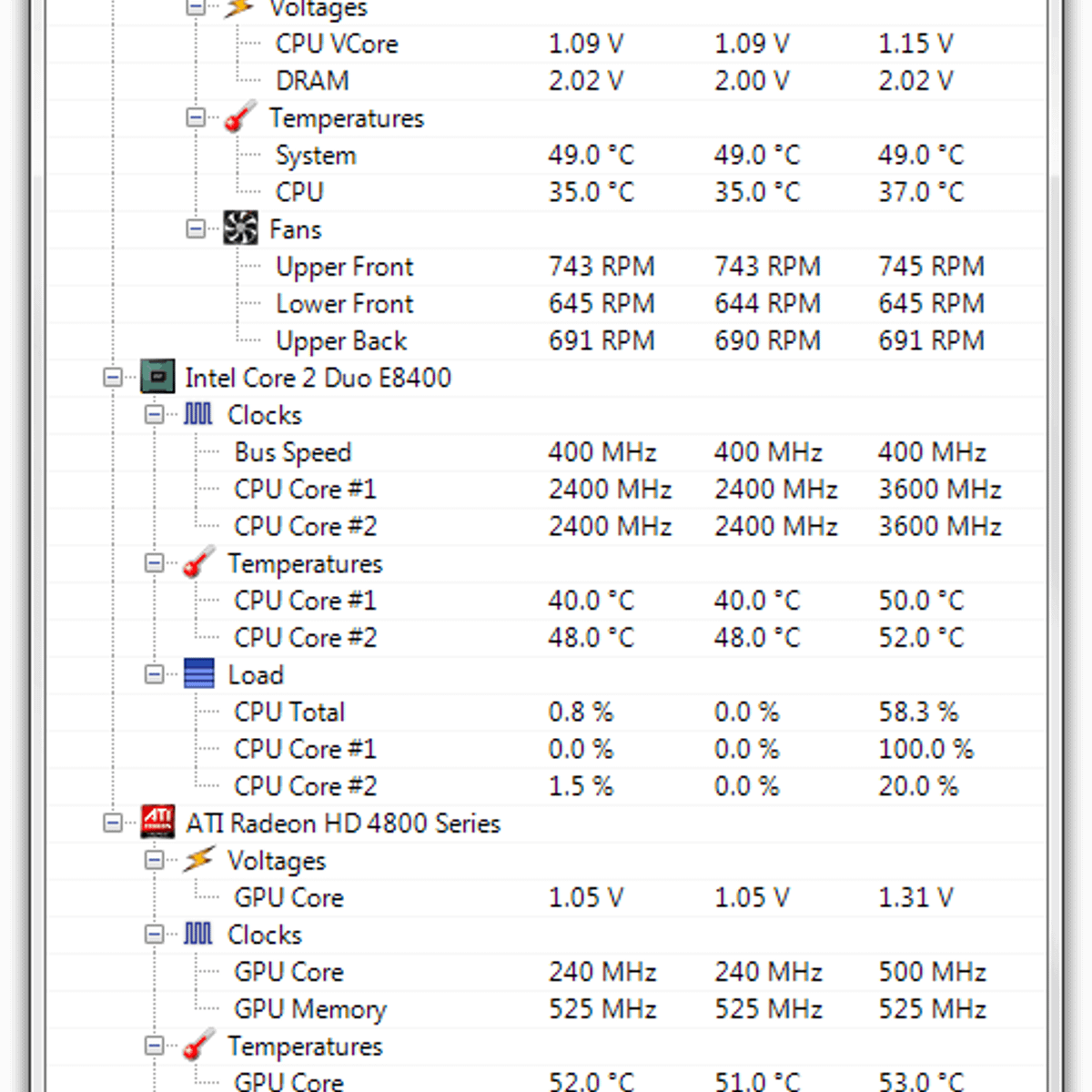

Also note that at the time of this writing, Kubernetes only supports a single GPU per node. Here is what the updated node capacity looks like. You can see that there's a new capacity field, and this can now be used by the Kubernetes scheduler to route pods accordingly.
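To illustrate the capacity field, here is a small Python sketch that reads a node object in JSON form (for example, the output of `oc get node <name> -o json`) and extracts the GPU count. The resource name `alpha.kubernetes.io/nvidia-gpu` is an assumption about the alpha-era naming and may differ on your version; the sample node data is invented for the example.

```python
import json

# Assumed name of the alpha GPU resource; verify against your cluster version.
GPU_RESOURCE = "alpha.kubernetes.io/nvidia-gpu"


def gpu_capacity(node_json: str, resource: str = GPU_RESOURCE) -> int:
    """Return the GPU count advertised in a node's status.capacity, or 0 if absent."""
    node = json.loads(node_json)
    capacity = node.get("status", {}).get("capacity", {})
    # Capacity values are serialized as strings, e.g. "1".
    return int(capacity.get(resource, 0))


# Made-up node fragment for illustration only.
SAMPLE_NODE = json.dumps({
    "status": {
        "capacity": {
            "cpu": "8",
            "pods": "110",
            GPU_RESOURCE: "1",
        }
    }
})

if __name__ == "__main__":
    print(gpu_capacity(SAMPLE_NODE))
```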

And here is an example pod file that requests the GPU device. The default command is "sleep infinity" so that we can connect to the pod after it is created using the "oc rsh" command to do some inspection. The cuda packages include some test utilities we can use to verify that the GPU can be accessed from inside the pod.
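A pod definition along these lines can be sketched in Python and emitted as JSON. This is not the original pod file: the pod name and image are hypothetical placeholders, and the resource name `alpha.kubernetes.io/nvidia-gpu` is an assumption about the alpha-era API that should be checked against your cluster.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1,
                     resource: str = "alpha.kubernetes.io/nvidia-gpu") -> dict:
    """Build a minimal Pod object that requests GPUs and idles with `sleep infinity`."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [{
                "name": name,
                "image": image,
                # Keep the container alive so it can be inspected with `oc rsh`.
                "command": ["sleep", "infinity"],
                # The GPU is requested as a resource limit (assumed resource name).
                "resources": {"limits": {resource: str(gpus)}},
            }],
        },
    }


if __name__ == "__main__":
    # Hypothetical pod name and image, for illustration only.
    print(json.dumps(gpu_pod_manifest("gpu-pod", "registry.example.com/cuda:latest"), indent=2))
```

The JSON output could be saved to a file and created with `oc create -f`, after which `oc rsh` gives a shell inside the pod.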

The test output includes the note that results may vary when GPU Boost is enabled. Next, some more snooping around to make sure the cgroups are set up correctly.

While GPU technology is still in alpha state in both Kubernetes and OpenShift (unsupported), and there are some rough edges, it does work well, and is making progress towards full support in the future.

Environment: RHEL 7.

Note this step takes about 4 minutes to complete while it rebuilds the kernel module: yum -y install xorg-x11-drv-nvidia xorg-x11-drv-nvidia-devel

On the node with the GPU, ensure the new modules are loaded.

Manual configuration of the kubelet is required, owing to the lack of a hardware-discovery facility (device discovery).

A maximum of 1 GPU pod per node is allowed; we should eventually be able to provide secure, multi-tenant access to multiple GPUs. For those interested in top performance and the best possible efficiencies, Kubernetes should be able to understand the physical NUMA topology of a system, and affine workload processes accordingly.
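To make the NUMA affinity idea concrete, here is a hedged Python sketch of what doing it by hand looks like on Linux: find the NUMA node a PCI device is attached to via sysfs, then look up the CPUs local to that node. It illustrates the concept only and is not something Kubernetes does in the setup described here; the function names are hypothetical.

```python
import os
from pathlib import Path


def parse_cpulist(text: str) -> set:
    """Parse a kernel cpulist string such as '0-3,8-11' into a set of CPU ids."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-")
            cpus.update(range(int(low), int(high) + 1))
        else:
            cpus.add(int(part))
    return cpus


def cpus_near_pci_device(pci_addr: str, sysfs: Path = Path("/sys")) -> set:
    """Return the CPUs on the same NUMA node as the given PCI device."""
    node = int((sysfs / "bus/pci/devices" / pci_addr / "numa_node").read_text())
    if node < 0:
        # -1 means no NUMA affinity is reported; fall back to the current CPU set.
        return set(os.sched_getaffinity(0))
    cpulist = (sysfs / "devices/system/node" / f"node{node}" / "cpulist").read_text()
    return parse_cpulist(cpulist)


if __name__ == "__main__":
    print(sorted(parse_cpulist("0-3,8-11")))
```

A process could then pin itself with `os.sched_setaffinity(0, cpus_near_pci_device(addr))`, where `addr` is the GPU's PCI address on that particular host.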
